Create 3D Models from Images! GANverse3D & NVIDIA Omniverse

This promising model called GANverse3D only needs an image to create a 3D figure that can be customized and animated!

Watch the video and support me on YouTube!

What you see below is someone carefully creating a scene for a video game. It takes many hours of work by a professional just for a single object like this one.

How cool would it be to take a picture of an object on the internet, let’s say a car, and automatically have the 3D object in less than a second ready to insert in your game? This is cool, right? Well, imagine that within a few seconds, you can even animate this car, making the wheels turn, flashing the lights, etc. Would you believe me if I told you that an AI could already do that? If video games weren’t enough, this new application works for any 3D scene you are working on, illustrations, movies, architecture, design, and more! Removing hundreds if not thousands of hours by professional designers for long and iterative tests, allowing small businesses to produce quick simulations a lot cheaper!

By the time you take your sip of coffee, this model will have processed an image of a car and generated a whole 3D animated version of it with realistic headlights, taillights, and blinkers! Moreover, you can even drive it around in a virtual environment platform like Omniverse, as you can see here.

To introduce this new tool presented in the recent GTC event, Omniverse was designed for creators who rely on virtual environments to test new ideas and visualize prototypes before creating their final products. You can use this tool to simulate complex virtual worlds with real-time ray tracing. Since this article isn’t about Omniverse, which is awesome by itself, I will not dive further into this new platform’s details. I linked more resources about it in the references below.

Here, I want to focus on the algorithm behind the 3D model generation technique NVIDIA published in ICLR and CVPR 2021. Indeed, this promising model called GANverse3D only needs an image to create a 3D figure that can be customized and animated! Just by its name, I think it won’t surprise you if I say that it uses a GAN to achieve that. Here, I won’t enter into how GANs work since I covered it many times in my previous articles.

Generative networks are relatively new in 3D model generation from 2D images, also called “inverse graphics” because of the complexity of the task needing to understand depths, textures, and lighting using multiple viewpoints of an object to generate such an accurate 3D model. Well, the researchers discovered that generative adversarial networks were implicitly acquiring such knowledge during training. Meaning that the information regarding the shapes, lighting, and texture of the objects was already encoded inside the GAN model’s latent code. This latent code is the output of the encoder part of the GAN architecture that is typically sent into a decoder to generate a new image controlling specific attributes.

As observed in previous research, we know that different layers control different attributes within the images, which is why you saw so many different and cool applications using GANs in the past year where some could control the style of the face to generate cartoon images. In contrast, others could make your head move and all this from a single image of yourself.

In this case, they used the well-known StyleGAN architecture, a powerful generator used on many different buzz applications you saw on the internet and my channel. The researchers experimentally found that the first four layers could control the camera viewpoints by fixing the remaining layers. Thus, by manipulating this characteristic of the StyleGAN architecture, they could use these first four layers to automatically generate such novel viewpoints for the rendering task from only one picture! Similarly, as you can see in the first two rows in the image below, doing the opposite and fixing these first four layers, they could produce images of different objects with the same viewpoints.

This characteristic, coupled with different loss functions, could control not only the shape and viewpoints of the images but also the texture and background! This discovery is very innovative since most works on inverse graphics use 3D labels or at least multi-view images of the same object during the training of their rendering network. This type of data is typically difficult to have and thus very limited. These approaches struggle on real photographs because of the domain gap between the training, synthetic, images, and these real images due to this lack of training data. As you can see, it only needs one picture to generate these amazing transformations that look just as real, reducing the need for data annotation by over 10,000 times. Of course, this GAN architecture that generates such important novel viewpoints also needs to be trained on a lot of data to make this possible. Fortunately, it is a lot less costly since it just needs many examples of the object itself and does not require multiple viewpoints of the same picture, but this is still a limitation to what object we can model using this technique.

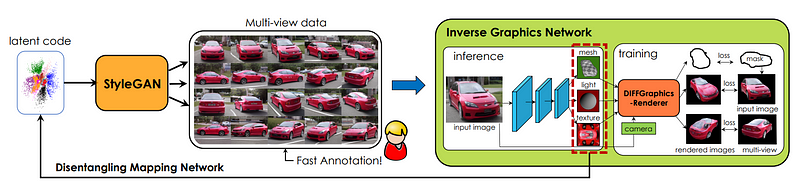

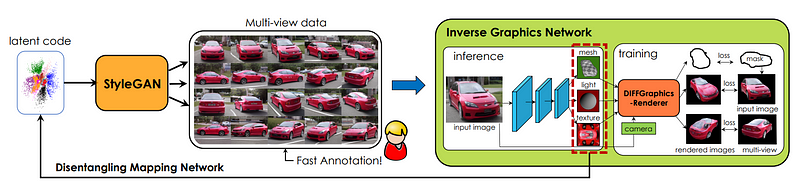

As you can see here, StyleGAN is used as a multi-view generator to build the missing data to train the rendering architecture.

Before going into the renderer, let’s jump back a little to understand the whole process. You can see here that the architecture doesn’t start with a regular image but with a latent code.

This latent code is basically what they learn during training. The CNNs and MLP networks you see here are just basic convolutional neural networks and multi-layer perceptrons used to create a code that disentangles the shape, texture, and background of the image. Meaning that this code will independently contain all these characteristics that will be used in the rendering model. During training, this code is updated to control these features by playing with the different StyleGAN layers, as we just saw.

When you will use this model and send an image, it will pass through the StyleGAN encoder and create the latent code containing all the information we need. Then, this information will be extracted using the disentangling module we just talked about to extract the camera viewpoint, the 3D mesh, texture, and background of your image. These characteristics are individually sent to the renderer producing the final model.

In this architecture, the renderer is a state-of-the-art differentiable renderer called DIB-R, here referred to as DIFFGraphicsRenderer. It is called a differentiable renderer because this technique, also developed by NVIDIA, just like StyleGAN and this very paper, was one of the first to allow the gradient to be analytically computed over the entire images making it possible to train a neural network to generate the 3D shape. You can see that they mainly used state-of-the-art models for each individual task because the overall architecture is much more important and innovative than these models themselves that are already extremely powerful on their own.

This is how this new paper, coupled with NVIDIA’s new 3D platform: Omniverse, will allow architects, creators, game developers, and designers over the world to easily add new animated objects to their mockups without needing any expertise in 3D modeling or a large budget to spend on renderings.

Note that this application currently only exists for cars, horses, and birds because of the amount of data GANs need to perform well, but this is extremely promising. I just want to come back in one year and see how powerful it will have become. Who would’ve thought 10 or 20 years ago that creating a controllable, realistically animated version of your car on your computer screen could take less than one second? And that to do so, it only needed a shiny little gadget in your pocket to take a picture of it and upload it. This is just crazy. I can’t wait to see what researchers will come up with in another 10–20 years!

Come chat with us in our Discord community: Learn AI Together and share your projects, papers, best courses, find Kaggle teammates, and much more!

If you like my work and want to stay up-to-date with AI, you should definitely follow me on my other social media accounts (LinkedIn, Twitter) and subscribe to my weekly AI newsletter!

To support me:

- The best way to support me is by being a member of this website or subscribe to my channel on YouTube if you like the video format.

- Support my work financially on Patreon

References

- Video demo: https://youtu.be/dvjwRBZ3Hnw

- Karras et al., (2019), “StyleGAN”: https://arxiv.org/pdf/1812.04948.pdf

- Chen et al., (2019), “DIB-R”: https://arxiv.org/pdf/1908.01210.pdf

- Omniverse, NVIDIA, (2021): https://www.nvidia.com/en-us/omniverse/

- Zhang et al., (2020), “IMAGE GANS MEET DIFFERENTIABLE RENDERING FOR INVERSE GRAPHICS AND INTERPRETABLE 3D NEURAL RENDERING”: https://arxiv.org/pdf/2010.09125.pdf

- GANverse3D official NVIDIA video: https://youtu.be/0PQnrnUIBlU

- NVIDIA’S GANverse 3D blog article: https://blogs.nvidia.com/blog/2021/04/16/gan-research-knight-rider-ai-omniverse/