How to Spot a Deep Fake. Breakthrough US Army technology (2021)

How to Spot a Deep Fake in 2021. Breakthrough US Army technology using artificial intelligence to find deepfakes.

Watch the video and support me on YouTube!

While they seem like they've always been there, the very first realistic deepfake didn't appear until 2017. It went from these first-ever resembling fake images automatically generated to today's identical copy of someone on videos, with sound.

The reality is that we cannot see the difference between a real video or picture and a deepfake anymore. How can we tell what's real from what isn't? How can audio files or video files be used in court as proof if an AI can entirely generate them? Well, this new paper may provide answers to these questions.

And the answer here may again be the use of artificial intelligence. The saying "I'll believe it when I'll see it" may soon change for "I'll believe it when the AI tells me to believe it..." I will assume that you've all seen deepfakes and know a little about them. Which will be enough for this article.

For more information about how they are generated, I invite you to watch the video I made explaining deepfakes just below, as this video will focus on how to spot them.

More precisely, I will cover a new paper by the USA DEVCOM Army Research Laboratory entitled "DEFAKEHOP: A LIGHT-WEIGHT HIGH-PERFORMANCE DEEPFAKE DETECTOR."

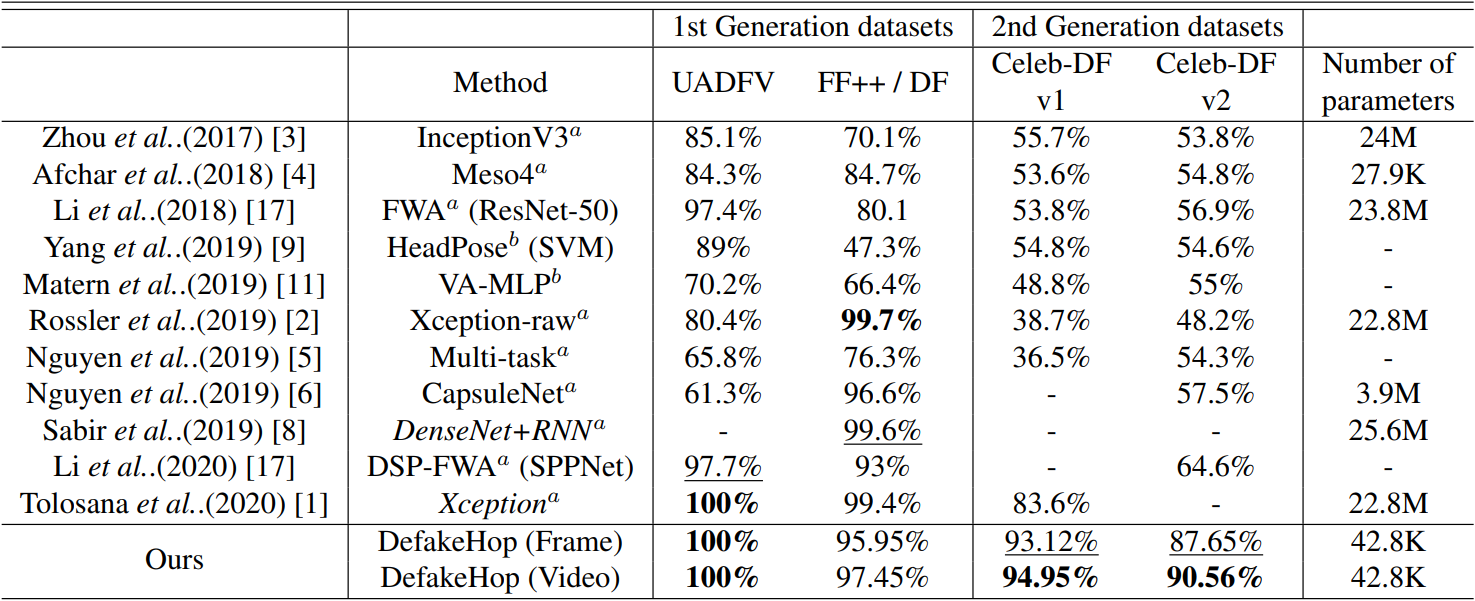

Indeed, they can detect deepfakes with over 90% accuracy in all datasets and even reach 100% accuracy in some benchmark datasets. What is even more incredible is the size of their detection model. As you can see, this DeFakeHop model has merely 40 thousand parameters, whereas the other techniques yielding much worse accuracy had around 20 million! This means that their model is 500 HUNDRED times smaller while outperforming the previous state-of-the-art techniques. This allows the model to quickly run on your mobile phone and allows you to detect deep fakes anywhere.

You may think that you can tell the difference between a real picture or a fake one, but if you remember the study I shared a couple of weeks ago, it clearly showed that around 50 percent of participants failed. It was basically a random guess on whether a picture was fake or not.

There is a website from MIT where you can test your ability to spot deefakes if you'd like to. Having tried it myself, I can say it's pretty fun to do. There are audio files, videos, pictures, etc. The link is in the description below. If you try it, please let me know how well you do! And if you know any other fun apps to test yourself or help research by trying our best to spot deepfakes, please link them in the comments. I'd love to try them out!

Now, if we come back to the paper able to detect them much better than we can, the question is: how is this tiny machine learning model able to achieve that while humans can't?

DeepFakeHop works in four steps.

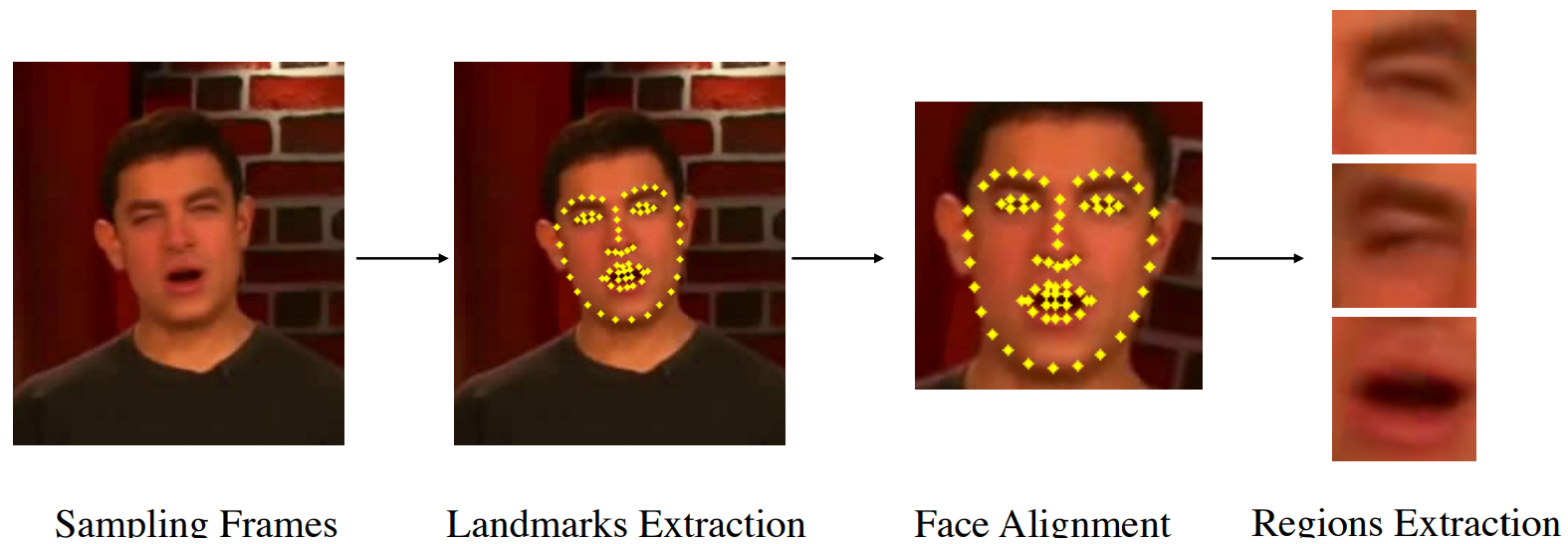

Step 1:

At first, they use another model to extract 68 different facial landmarks from each video frame. These 68 points are extracted to understand where the face is, recenter, orient and resize it to make them more consistent, and then extract specific parts of the face from the image. These are the "patches" of the image we will send our network, containing specific individual face features like the eyes, mouth, nose. It is done using another model called OpenFace 2.0. It can accurately perform facial landmark detection, head pose estimation, facial action unit recognition, and eye-gaze estimation in real-time. These are all tiny patches of 32 by 32 that will all be sent into the actual network one by one. This makes the model super efficient because it deals with only a handful of tiny images instead of the full image. More details about OpenFace2.0 can be found in the references below if you are curious about it.

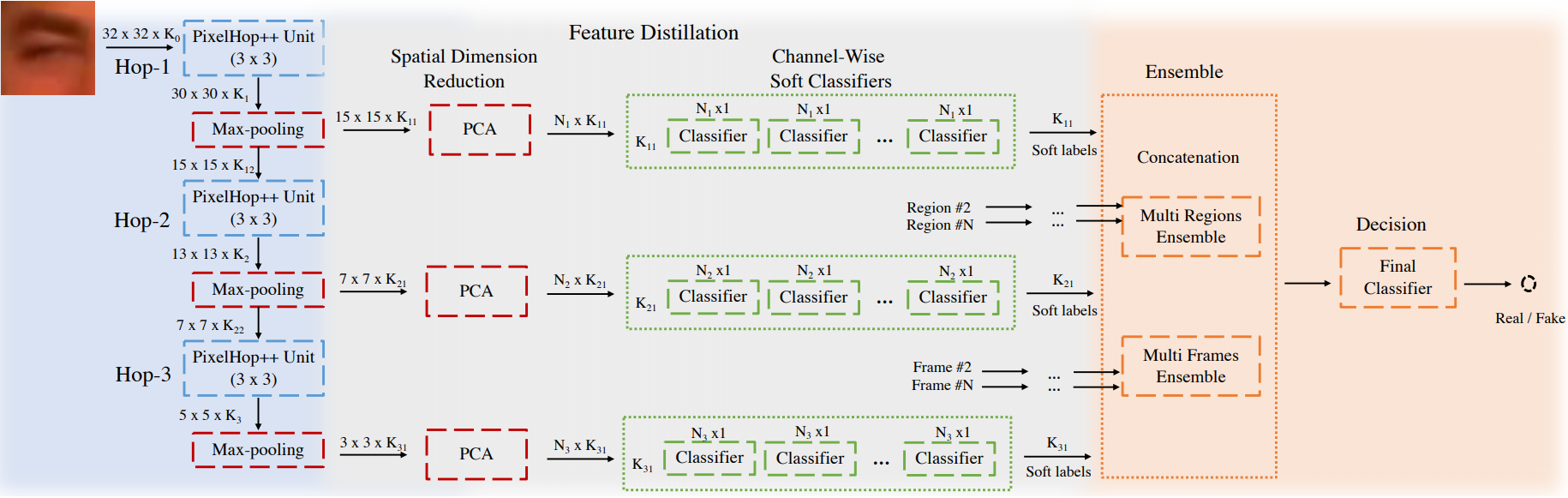

Step 2 to 4 (left to right, blue, green, orange):

More precisely, the patches are sent to the first PixelhHop++ unit named Hop-1, as you can see. Representing the step one in blue. This is an algorithm called Saab transform to reduce the dimension. It will take the 32 by 32 image and reduce it to a downscaled version of the image but with multiple channels representing its response from different filters learned from the Saab transform. You can see the Saab transform as a convolution process, where the kernels are found using the PCA dimension reduction algorithm replacing the need of backpropagation to learn these weights. I will come back to the PCA dimension reduction algorithm in a minute as it is repeated in the next stage. These filters are optimized to represent the different frequencies in the image, basically getting activated by varying degrees of details. The Saab transform was shown to work well against adversarial attacks compared to basic convolutions trained with backpropagation. You can also find more information about the Saab transformation in the references below. If you are not used to how convolutions work, I strongly invite you to watch the video I made introducing them:

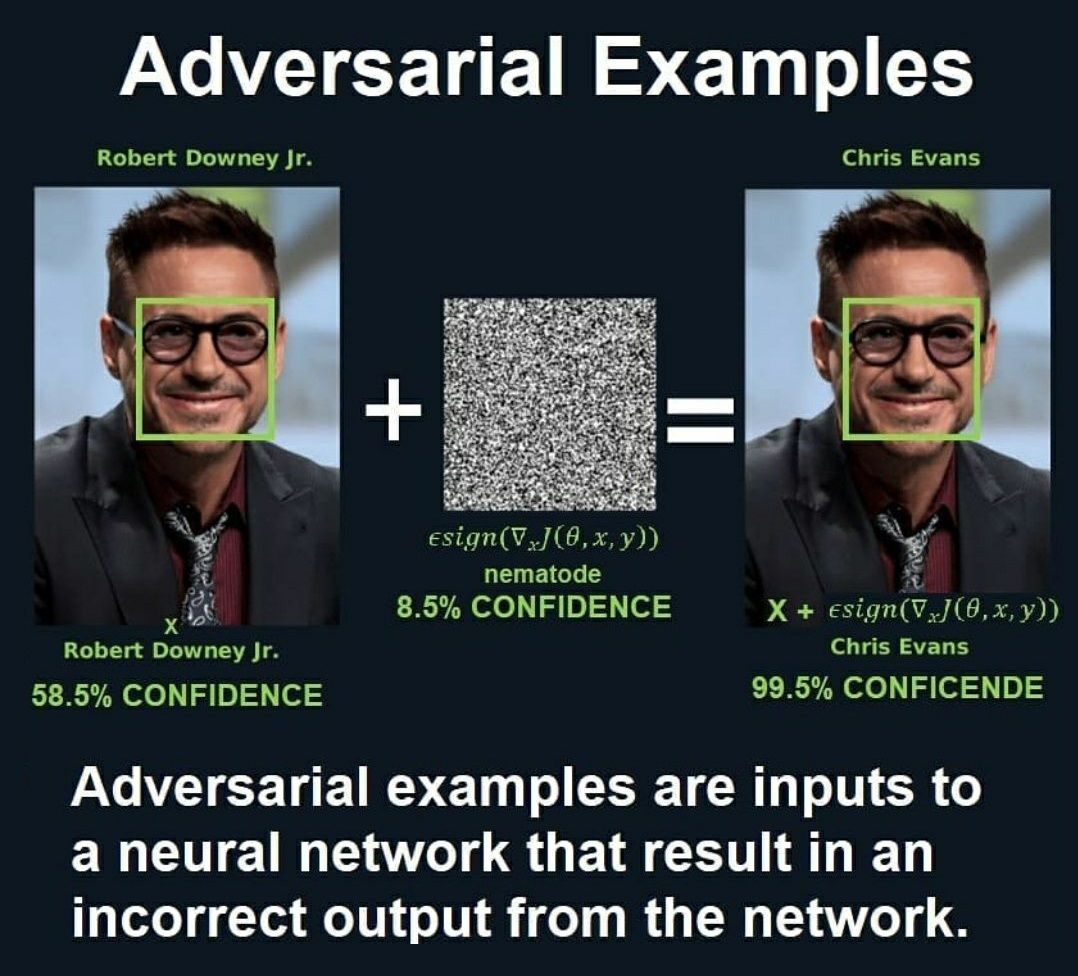

I said Saab transforms worked well on adversarial attacks. These adversarial attacks happen when we "attack" an image by changing a few pixels or adding noise that humans cannot see to change the results of a machine learning model processing the image.

So to simplify, we can basically see this PixelHop++ Unit as a typical 3 by 3 convolution here since we do not look at the training process. Of course, it works a bit differently, but it will make the explanation much more straightforward as the process is comparable. Then, the "Hop" step is repeated three times to get smaller and smaller versions of the image with concentrated general information and more channels. These channels are simply the outputs, or responses, of the input image by filters that react differently depending on the level of detail in the image, as I said earlier. One new channel per filter used.

Thus, we obtain various results giving us precise information about what the image contains, but these results are smaller and smaller containing less spatial details unique to that precise image sent in the network, and therefore have more general and useful information with regard to what the image actually contains. The first few images are still relatively big, starting at 32 by 32, being the initial size of the patch and thus contains all the details. Then, it drops to 15 by 15, and finally to 7 by 7 images, meaning that we have close to zero spatial information in the end.

The 15 by 15 image will just look like a blurry version of the initial image but still contains some spatial information, while the 7 by 7 image will basically be a very general and broad version of the image with close to no spatial information at all.

So just like a convolutional neural network, the deeper we get, the more channels we have meaning that we have more filter responses reacting to different stimuli, but the smaller they each are, ending with images of size 5x5.

Allowing us to have a broader view in many ways, keeping a lot of unique valuable information even with smaller versions of the image.

The images get even smaller because each of the PixelHop units is followed by a max-pooling step.

They are simply taking the maximum value of each square of two by two pixels, reducing the image size by a factor of four at each step.

Then, as you can see in the full model shown above, the outputs from each max-pooling layer are sent for further dimension reduction using the PCA algorithm. Which is the third step, in green. The PCA algorithm mainly takes the current dimensions, for example, 15 by 15 here in the first step, and minimizes that while maintaining at least 90% of the intensity of the input image.

Here is a very simple example of how PCA can reduce the dimension, where two-dimensional points of cats and dogs are reduced to one dimension on a line, allowing us to add a threshold and easily build a classifier. Each hop gives us respectively 45, 30, and 5 parameters per channel instead of having images of size 15 by 15, 7 by 7, and 3 by 3, which would give us in the same order 225, 49, and 9 parameters. This is a much more compact representation while maximizing the quality of information it contains. All these steps were used to compress the information and make the network super fast.

You can see this as squeezing all the helpful juice at different levels of details of the cropped image to finally decide whether it is fake or not, using both detailed and general information in the decision process (step 4 in orange).

I'm glad to see that the research in countering these deepfakes is also advancing, and I'm excited to see what will happen in the future with all that. Let me know in the comments what you think will be the main consequences and concerns regarding deepfakes.

Is it going to affect law, politics, companies, celebrities, ordinary people? Well, pretty much everyone...

Let's have a discussion to share awareness and spread the word to be careful and that we cannot believe what we see anymore, unfortunately. This is both an incredible and dangerous new technology.

Please, do not abuse this technology and stay ethically correct. The goal here is to help improve this technology and not to use it for the wrong reasons.

Come chat with us in our Discord community: Learn AI Together and share your projects, papers, best courses, find Kaggle teammates, and much more!

If you like my work and want to stay up-to-date with AI, you should definitely follow me on my other social media accounts (LinkedIn, Twitter) and subscribe to my weekly AI newsletter!

To support me:

- The best way to support me is by being a member of this website or subscribe to my channel on YouTube if you like the video format.

- Support my work financially on Patreon

References

- Test your deepfake detection capacity: https://detectfakes.media.mit.edu/

- DeepFakeHop: Chen, Hong-Shuo et al., (2021), “DefakeHop: A Light-Weight High-Performance Deepfake Detector.” ArXiv abs/2103.06929

- Saab Transforms: Kuo, C.-C. Jay et al., (2019), “Interpretable Convolutional Neural Networks via Feedforward Design.” J. Vis. Commun. Image Represent.

- OpenFace 2.0: T. Baltrusaitis, A. Zadeh, Y. C. Lim and L. Morency, "OpenFace 2.0: Facial Behavior Analysis Toolkit," 2018 13th IEEE International Conference on Automatic Face & Gesture Recognition (FG 2018), 2018, pp. 59-66, doi: 10.1109/FG.2018.00019.