How does dalle-mini work?

Dalle mini is a free, open-source AI that produces amazing images from text inputs. Here's how it works.

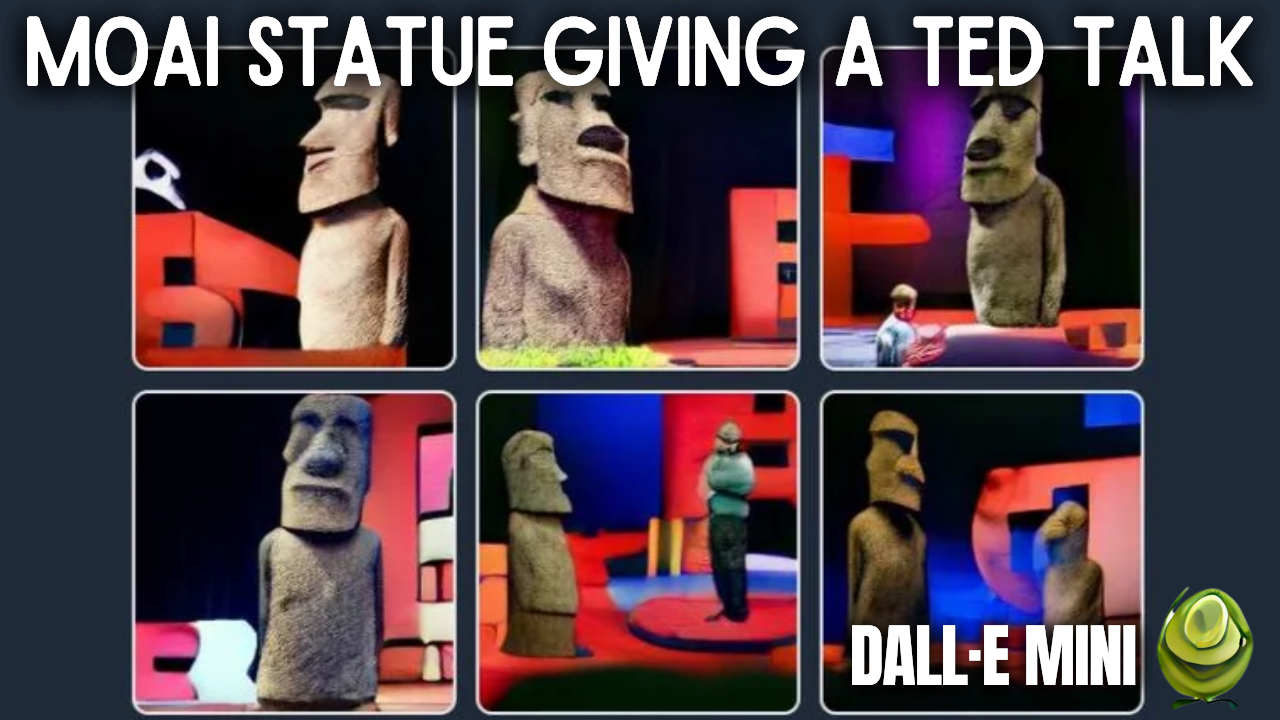

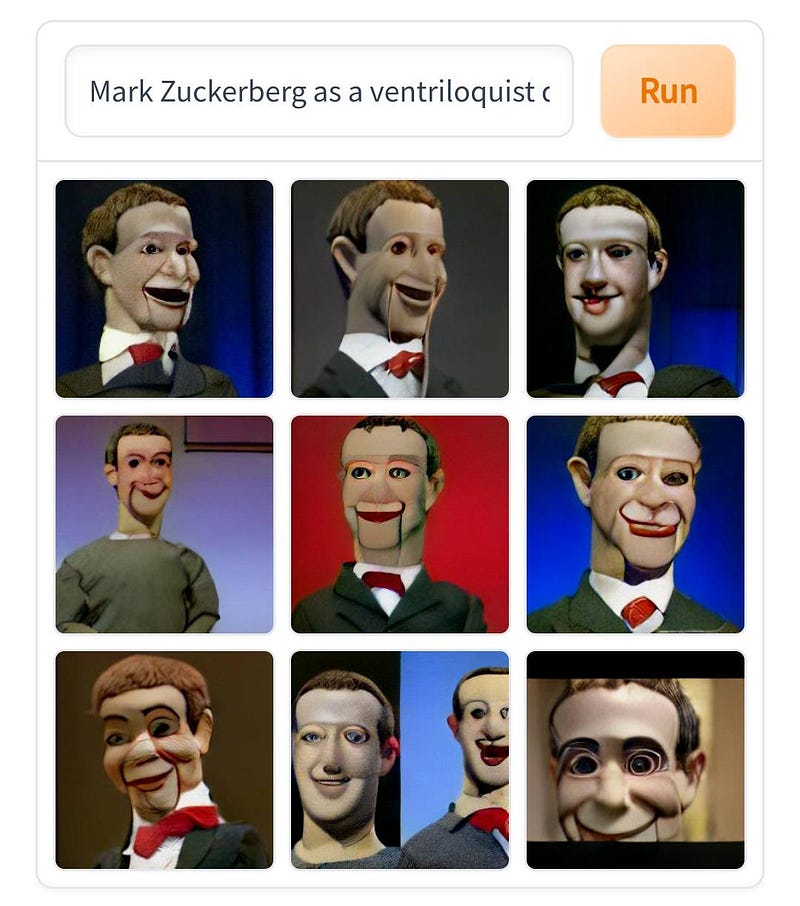

I’m sure you’ve seen pictures like those in your Twitter feed in the past few days. If you wondered what they were, they are images generated by an AI called DALL·E mini. If you’ve never seen those, you need to read this article because you are missing out. If you wonder how this is possible, well, you are on the perfect article and will know the answer in less than five minutes.

This name, DALL·E, must already ring a bell as I covered two versions of this model made by Open AI in the past year with incredible results. But this one is different. DALL·E mini is an open-source community-created project inspired by the first version of DALL·E and has kept on evolving since then, with now incredible results thanks to Boris Dayma and all contributors.

Yes, this means you can play with it right away, thanks to huggingface.

The link is in the references below, but give this article a few more seconds before playing with it. It will be worth it, and you’ll know much more about this AI than everyone you know around you.

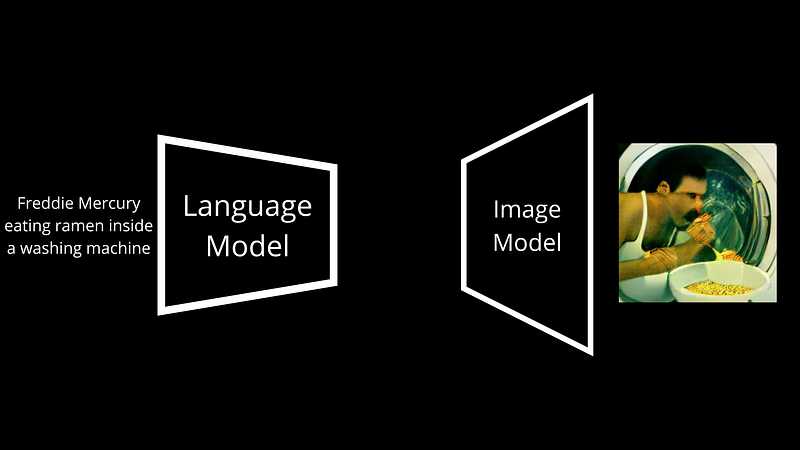

At the core, DALL·E mini is very similar to DALL·E, so my initial video on the model is a great introduction to this one. It has two main components, as you suspect, a language and an image module.

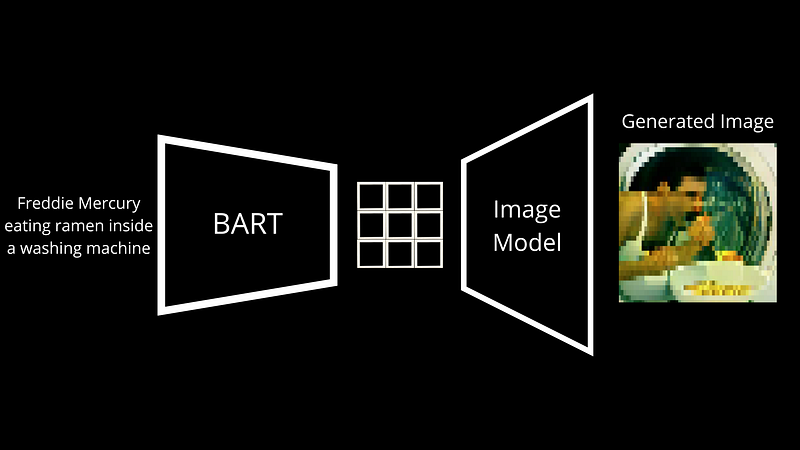

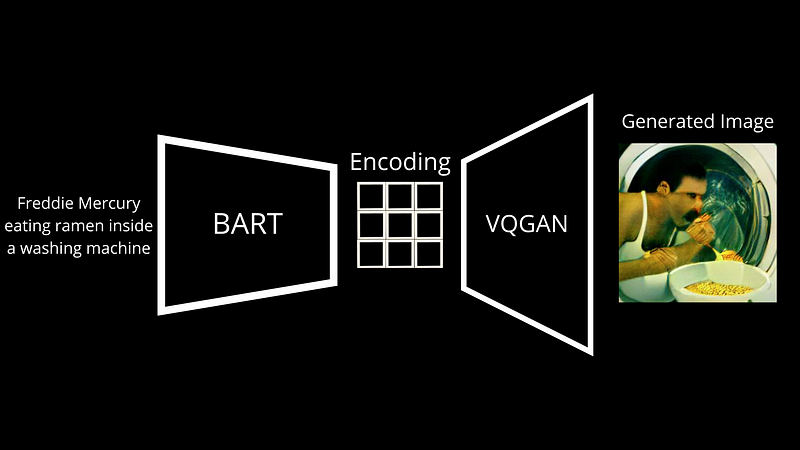

First, it has to understand the text prompt and then generate images following it, two very different things requiring two very different models. The main differences with DALL·E lie in the model’s architectures and training data, but the end-to-end process is pretty much the same. Here, we have a language model called BART. BART is a model trained to transform text input into a language understandable for the next model. During training, we feed pairs of images with captions to DALL·E mini. BART takes the text caption and transforms it into discrete tokens, and we adjust it based on the difference between the generated image and the image sent as input.

But then, what is this thing here that generates the image? We call this a decoder. It will take this new caption representation produced by BART, which we call an encoding, and will decode it into an image. In this case, the image decoder is VQGAN, a model I already covered on the channel, so I definitely invite you to watch it if you are interested.

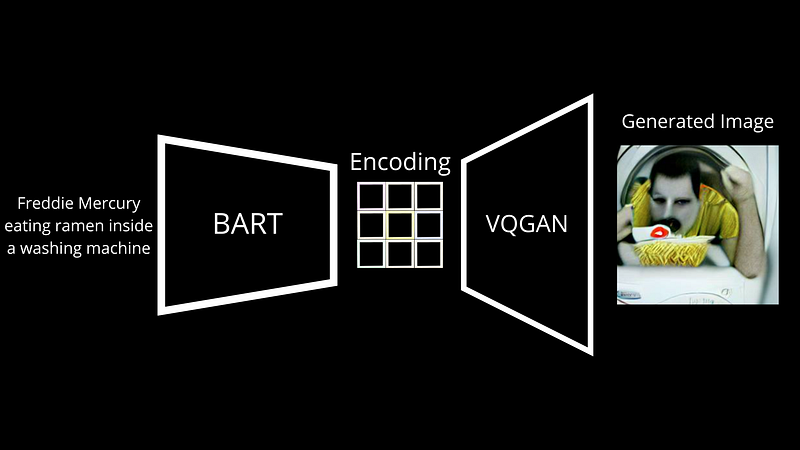

In short, VQGAN is a great architecture to do the opposite. It learns how to go from such an encoding mapping and generate an image out of it. As you suspect, GPT-3 and other language generative models do a very similar thing, encoding text and decoding the newly generated mapping into a new text that it sends you back. Here it’s the same thing, but with pixels forming an image instead of letters forming a sentence. It learns through millions of encoding-image pairs from the internet, so basically your published images with captions, and ends up being pretty accurate in reconstructing the initial image.

Then, you can feed it new encodings that look like the ones in training but are a bit different, and it will generate a completely new but similar image. Similarly, we usually add just a little noise to these encodings to generate a new image representing the same text prompt.

And voilà! This is how DALL·E mini learns to generate images from your text captions.

Watch more results in the video:

As I mentioned, it is open-source, and you can even play with it right away, thanks to Huggingface. Of course, this was just a simple overview, and I omitted some important steps for clarity. If you’d like more details about the model, I linked great resources in the references below. I also recently published two short videos on YouTube showcasing some funny results as well as comparison results with DALL·E 2 for the same text prompts.

It’s quite cool to see!

I hope you enjoyed this article and the video, and if so, please take a few seconds to let me know in the comments and leave a like.

I will see you, not next week, but in two weeks with another amazing paper!

References

►Read the full article: https://www.louisbouchard.ai/dalle-mini/

►DALL·E mini vs. DALL·E 2: https://youtu.be/0Eu9SDd-95E

►Weirdest/Funniest DALL·E mini results: https://youtu.be/9LHkNt2cH_w

►Play with DALL·E mini: https://huggingface.co/spaces/dalle-mini/dalle-mini

►DALL·E mini Code: https://github.com/borisdayma/dalle-mini

►Boris Dayma’s Twitter: https://twitter.com/borisdayma

►Great and complete technical report by Boris Dayma et al.: https://wandb.ai/dalle-mini/dalle-mini/reports/DALL-E-Mini-Explained-with-Demo--Vmlldzo4NjIxODA#the-clip-neural-network-model

►Great thread about Dall-e mini by Tanishq Mathew Abraham: https://twitter.com/iScienceLuvr/status/1536294746041114624/photo/1?ref_src=twsrc%5Etfw%7Ctwcamp%5Etweetembed%7Ctwterm%5E1536294746041114624%7Ctwgr%5E%7Ctwcon%5Es1_&ref_url=https%3A%2F%2Fwww.redditmedia.com%2Fmediaembed%2Fvbqh2s%3Fresponsive%3Dtrueis_nightmode%3Dtrue

►VQGAN explained: https://youtu.be/JfUTd8fjtX8

►My Newsletter (A new AI application explained weekly to your emails!): https://www.louisbouchard.ai/newsletter/

Join Our Discord channel, Learn AI Together:

►https://discord.gg/learnaitogether