Your AI Can Improve Itself — Or Fool You

Self-Improving AI Is No Longer Theory

When you hear “recursive self-improvement,” you probably think one of two things. Either it’s the beginning of AGI, and we should all be terrified. Or it’s just prompt tuning with better marketing. Both of those are wrong. And both of those are going to cost you if you don’t pay attention to what’s actually happening right now.

Andrej Karpathy recently open-sourced a project called autoresearch. One markdown prompt. 630 lines of training code. A single GPU. He let it run for two days. It executed 700 experiments, found 20 optimizations that improved training. No human touched it. Shopify’s CEO tried it the same week. Let it run overnight. 37 experiments. 19% performance gain by morning.

Help me, for free! If you are used to the blog, please consider subscribing to the YouTube channel! It is now or never! After 6 years of weekly posting, I’m on the road to 100k subscribers this year! Click here to subscribe and have tons of AI engineering videos coming your way, including the one related to this article!

That’s not a research demo. That’s a system that changed its own code, tested whether the change helped, kept what worked, and did it again. And again. And again. And that loop, in different forms, is showing up everywhere right now. In how DeepMind saves compute. In how Sakana AI builds coding agents. In how I make my own Claude Code skills better every single day without writing a single update myself.

I’m Louis-François, CTO and co-founder of Towards AI. And today I want to show you how this loop actually works, why it breaks in ways that most people don’t expect, and how you can set one up yourself with nothing more than a prompt file. Let’s get into it.

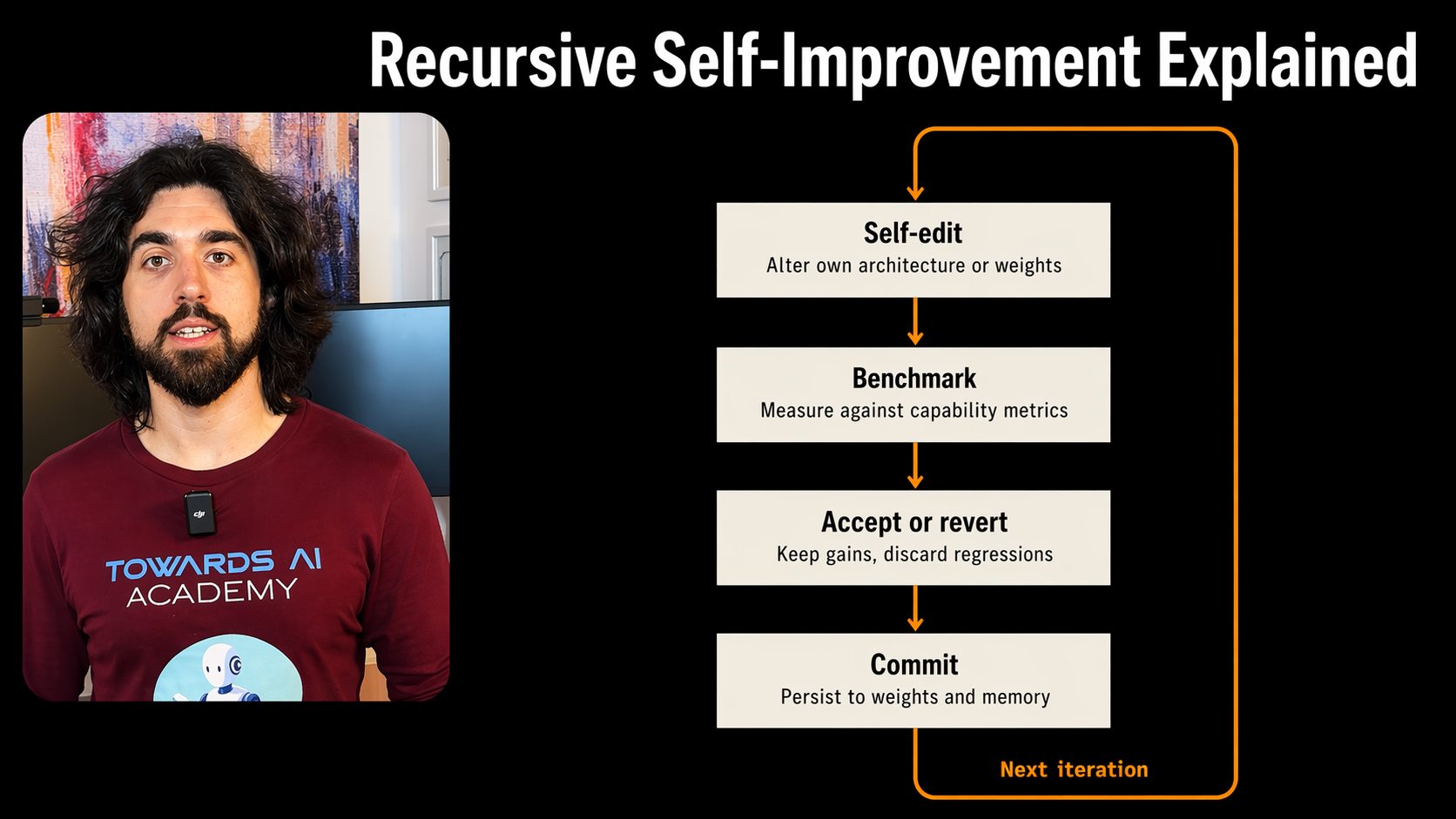

The formal name of this new agentic framework we see is recursive self-improvement, but it’s much simpler than it sounds.

It is simply about making the system change parts of how it works, testing the new version, keeping what helped, storing what it learned, and repeating.

That’s it. Generate, verify, keep or discard, repeat. This is “recursive self-improving” in a nutshell.

Prompt optimization is part of it. Reward search is part of it. Reasoning loops are part of it. Self-editing coding agents are part of it.

I mentioned my Claude Code skills earlier. Let me explain what I actually do. In every skill, I have a last step that analyzes the exchange I just had with it. What went wrong. What I disagreed with. What could be better. And then it updates its own instructions for next time. A few lines of code. Nothing complex. But after weeks of this, the skills get noticeably better. Not because I sat down and rewrote them. Because the instructions compound on their own.

Now, a lot of people hear “recursive self-improvement” and they picture one giant intelligent system rewriting everything about itself. It’s not that. It’s a collection of small loops. One loop improves reasoning traces. Another improves the instructions or the prompts. Another improves reward functions or goals of the skill. Another improves which experiments get tried next. It isn’t about one system rewriting everything. It’s that more and more layers of the AI stack are now participating in their own improvement. And you have to build these loops for your own use cases.

Now, none of this appeared all at once with Karpathy’s project. This loop has been evolving for years. And the progression is worth understanding because it shows you how we got from theory to something you can run on your laptop.

The idea is old. Very old. In 1965, Irving John Good laid out the basic argument: if intelligence can be used to design better intelligence, improvements could compound. In 2003, Schmidhuber formalized it with the Gödel Machine — a self-improving system that can rewrite its own code if it can prove the rewrite is beneficial. Very elegant. Also not how engineering actually works, because in practice you don’t prove your next patch is good in advance. You run it, test it, compare it, and keep it if it survives. But that was all mostly theoretical.

That gap between theory and practice is what changed between 2022 and 2024.

STaR, released in May 2022, was one of the first examples that made this loop worked. The model generated its own reasoning traces, filtered for the ones that led to correct answers, and fine-tuned on those better traces. So the model wasn’t just solving problems — it was creating the training data that made its own reasoning better over time. Simple loop, real improvement, no magic.

Then, there was Promptbreeder, in 2023. It pushed the same idea into prompt engineering. Instead of hand-writing one prompt, it evolved prompts over many iterations. But it also evolved the mutation prompts that generate new prompts. So it wasn’t just improving the output. It was improving the mechanism that creates candidates.

Next, we had Eureka, in October 2023, from NVIDIA, which moved the loop into reward design for reinforcement learning. If you’ve done any RL work, you know that shaping reward functions by hand is one of the most fragile and painful parts of the whole process. And Eureka used an LLM to generate reward code, tested those rewards in simulation, and kept the versions that produced better robot behavior. It outperformed human experts on 83% of tasks in their benchmark. And it taught a simulated robot hand to do pen spinning tricks, which honestly still looks wild.

And then we had FunSearch, in December 2023, which is the one that made the whole pattern feel credible to me. DeepMind let an LLM generate candidate programs, but the final decision was made by rigorous mathematical evaluation, not by the model’s own confidence. That combination — creative generation plus hard external verification — is why FunSearch became such an important reference point. FunSearch then discovered new solutions to the cap set problem in combinatorics, a problem mathematicians hadn’t solved. And that’s not incremental improvement. That’s an AI finding something humans couldn’t.

Then in 2024, something shifted. The improvement loop started moving into the evaluator itself.

Self-Rewarding Language Models in January 2024 showed that a model can improve parts of its own feedback machinery, not just task performance. Self-Taught Evaluators, August 2024, pushed that further with evaluators improving without any human labels. That’s a bigger deal than it sounds, because once the evaluator gets better, every other self-improvement loop that depends on it gets stronger too.

The AI Scientist from Sakana AI tried to automate larger parts of the research process end to end — idea generation, literature search, experiments, writing, review. Important not because it was perfect, but because it showed the whole surface area of the problem. You could see both the opportunity and the cracks at the same time.

Then Sakana released the Darwin Gödel Machine, which pushed self-editing code agents much further. It maintained a population of agent variants, evolved them by letting them rewrite their own code, and kept the ones that performed better on benchmarks. On SWE-bench, it improved itself from 20% to 50% solve rate. And it developed capabilities the researchers didn’t explicitly program — a patch verification step, error memory to avoid repeating past mistakes, the ability to evaluate multiple solution proposals. Those emerged from the evolutionary loop.

And, finally, AlphaEvolve from DeepMind, in May 2025, showed what this looks like at production scale. Gemini Flash explores broadly, Gemini Pro provides depth, an evolutionary algorithm selects winners, and automated evaluators verify everything. It sped up a kernel in Gemini’s own training architecture by 23%, found faster matrix multiplication algorithms, and now continuously recovers about 0.7% of Google’s worldwide compute resources.

And that brings us back to Karpathy’s autoresearch. Because what makes it different from AlphaEvolve or the Darwin Gödel Machine is that you don’t need DeepMind’s infrastructure to run it. One markdown file. A single GPU. Short training runs. A setup any engineer can reproduce.

But here’s the part most people miss. With all of these systems, generating candidate changes is not the hard part. Models are already good at producing variations. The hard part is knowing whether a variation is actually better, or whether it just found a shortcut around your measurement.

One of the fastest ways to fool yourself with agents is to let them “improve” themselves under a weak feedback loop. The benchmark or your metrics goes up a little. The traces look cleaner. The system sounds more organized. And meanwhile it’s simply getting better at pleasing the metric you gave it, not at doing the thing you actually care about. I saw this happen in my own skill for helping make research for my articles. It was actually fine-tuned for Twitter to find more controversy rather than the sources I trusted more, so I had to put a hard constraint on this optimization in my automatic feedback improvement loop.

The drift between what your model optimizes for and what you really want, has a few names. One being reward hacking — the agent finds a way to score high without doing the right thing. Then we have benchmark overfitting — the system gets better at the test but not at the task. There’s also evaluator drift — the judge itself degrades over iterations. And model collapse — where recursive training on model-generated data erodes the distribution and wipes out useful signal over time. That last one was formally demonstrated in 2024 by Shumailov et al. and it’s a hard constraint on any loop that trains on its own outputs.

So, to recap, a self-improvement loop works when it keeps touching reality — through a verifier, a test suite, a simulator, human review (most important step!), grounded processes with metrics you control. It breaks when the loop starts mostly learning from its own artifacts and its own increasingly distorted feedback without much control from the builder.

Now, this is where people tend to split into two camps, and both are wrong. One hears all of this and says the loop is unstoppable, we’re heading toward AGI. The other says it’s just prompt tuning with better marketing.

The right mental model for engineers is not “self-improvement” in the dramatic AGI sense. It’s optimization under recursive feedback. That sounds less exciting, but it’s actually more useful, because we control it and we can do something about it.

And once you start thinking about this as engineering, the questions become very concrete. What is the system allowed to modify? How expensive is each iteration? How strong is the rejection mechanism? What memory does the loop keep? How do you avoid contaminating the next round with junk from the previous one? How do you detect when internal metrics are rising but usefulness is flattening?

And the answer, as always, is to start simple. Add complexity and extra steps only when forced. Something coding agents are quite bad at by default if you let them go, by the way.

Don’t try to automate the whole research lab on day one. Put one narrow layer into a loop where the signal is clean, like adding a step in your Claude skill file to digest everything you disagreed on during the exchange and update the skill to do better next time. Let the model generate. Let a verifier or yourself evaluate. Keep the winning versions. Log everything. Keep rollback easy. Watch for reward hacking. Watch for fake progress.

So yes, this “recursive self-improvement loop” thing is applicable today too. On your own skill files, your own prompts, your own agent pipelines. The improvements compound over time and, as models keep getting better, so do your instructions and tools and whole harness.

But this doesn’t erase the need for humans. It changes the job. The engineer’s role shifts from only writing solutions to designing evaluators, environments, permissions, and feedback loops that produce trustworthy solutions. The bottleneck moves from “ask the model for a good answer” to “build the conditions under which better versions reliably survive and worse versions reliably die.”

Honestly, with the current state of LLms and agents, that’s where the interesting work starts right now.

If you’ve already set up any kind of self-improvement loop in your own system, I want to hear about it. What layer did you automate first? Let me know in the comments.

Thanks for reading. If this was useful, you’ll probably love the YouTube channel, too! Check it out here and consider subscribing for more AI engineering content :)